Prompt Engineering 101: Foundations for Better AI Results

Apply the Gartner ReFLECT framework and build the skills needed to improve your prompting over time.

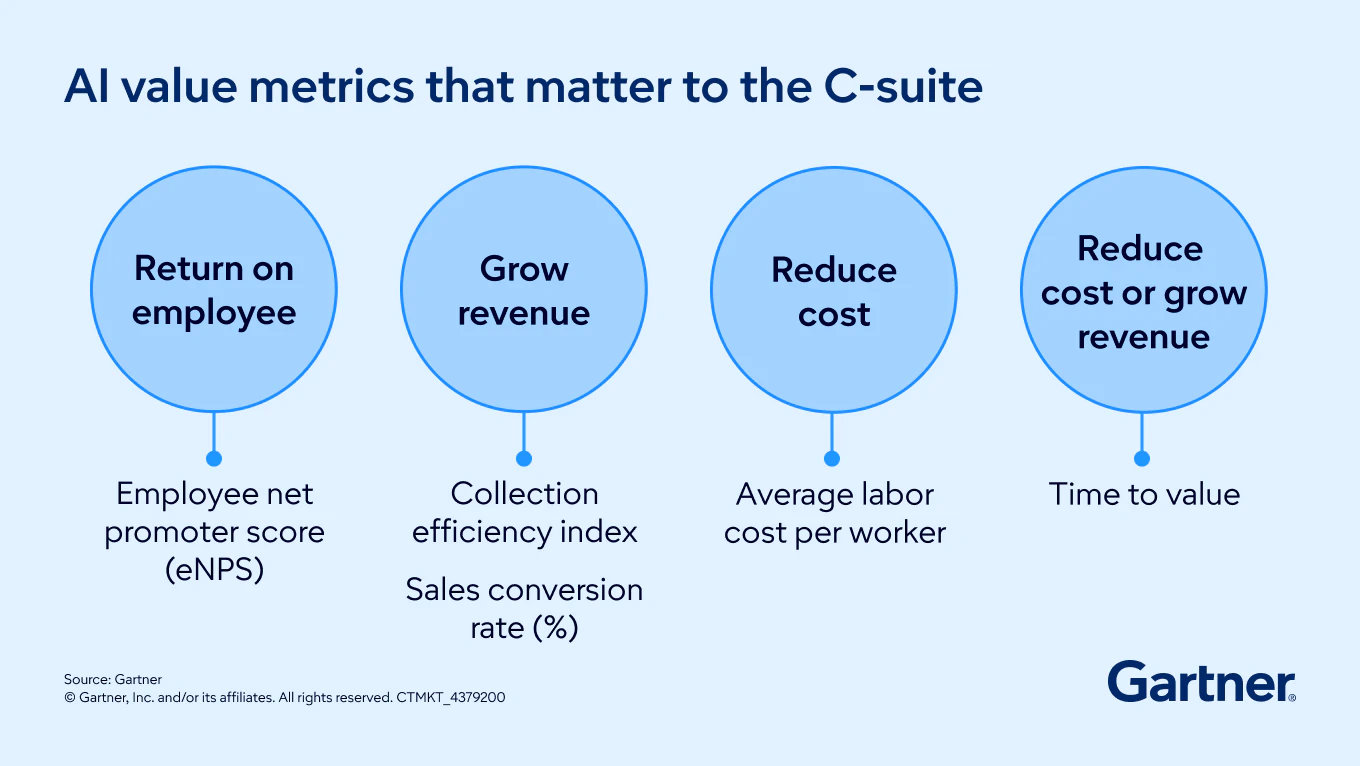

Unlock ROI from AI

Build the bridge between AI hype, productivity gains and real business value.

Experience the big ideas and actionable insights to harness your full potential.

Join Gartner analysts to dive deeper on trends and topics that matter to business leaders.

Drive stronger performance on your mission-critical priorities.